The 21st century has brought a wave of new information technologies. Their rapid advancement has not only made our lives easier but has also changed the way we communicate, work, and even think. This article explores some of the latest advancements in these technologies.

Artificial Intelligence (AI)

Artificial Intelligence, commonly referred to as AI, has been a hot topic in the tech world for the past few years. With the ability to process large amounts of data quickly and efficiently, AI is transforming industries across the board, from healthcare to finance, marketing, and beyond.

AI refers to the capability of a machine or computer program to mimic human thought processes and execute tasks that generally require human intelligence. This includes tasks such as learning, reasoning, problem-solving, perception, and even language translation.

AI models are now capable of predictive analysis, enabling businesses to anticipate customer needs and provide personalized solutions. In healthcare, AI is being used to predict health trends and help diagnose diseases faster and more accurately. In the financial sector, AI has revolutionized risk management and fraud detection by analyzing patterns and trends.

Machine Learning (ML)

Machine Learning, a subset of AI, is a technology that allows computers to learn from data and improve their performance without being explicitly programmed. It involves the development of algorithms that can learn from and make predictions or decisions based on data.

In a nutshell, Machine Learning enables computers to learn from past data or experiences and make predictions about the future. This technology is used in a variety of applications, from recommendation systems on e-commerce websites to voice recognition systems in smartphones and self-driving cars.
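To make the idea of learning from past data concrete, here is a minimal sketch in Python. It fits a straight line to a handful of past observations and uses that line to predict the next value. The sales figures and the function names `fit_line` and `predict` are made up for illustration; real systems typically rely on dedicated libraries and far more data.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance of x and y, and variance of x (both unnormalized).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x):
    """Use the learned (slope, intercept) pair to predict y for a new x."""
    slope, intercept = model
    return slope * x + intercept


# Hypothetical past data: month number and units sold in that month.
months = [1, 2, 3, 4, 5]
units_sold = [12, 14, 16, 18, 20]

model = fit_line(months, units_sold)   # the "learning" step
forecast = predict(model, 6)           # the "prediction" step
print(f"Forecast for month 6: {forecast:.1f} units")
```

Nothing in the code states the rule "sales grow by two units a month"; the program recovers that pattern from the data, which is the sense in which it is not explicitly programmed.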

Machine learning has also significantly impacted the healthcare industry. For instance, ML algorithms can predict disease progression and assist in developing personalized treatment plans. ML is also used to forecast stock market trends, enhance customer service, and improve cybersecurity.

Internet of Things (IoT)

The Internet of Things is another emerging technology that is transforming our lives. IoT refers to the network of physical devices, vehicles, home appliances, and other items embedded with sensors, software, and network connectivity that enable these objects to connect and exchange data.

In essence, IoT is all about connecting devices over the internet, letting them communicate with us, applications, and each other. It has varied applications, from smart homes and wearables to smart cities and industries.

For example, with a smart home system, you can control your home’s lighting, heating, and electronic devices from your smartphone. In industrial settings, IoT can be used to monitor equipment and increase efficiency and safety.
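As a rough illustration of equipment monitoring, the Python sketch below simulates devices that report temperature readings as JSON messages, plus a monitor that flags any device running too hot. The device names, the message fields, and the 80 °C limit are all assumptions made for this example; a real deployment would send these messages over a network protocol rather than pass them around in a list.

```python
import json

TEMP_LIMIT_C = 80.0  # hypothetical safety threshold, chosen for illustration


def encode_reading(device_id, temperature_c):
    """Serialize one sensor reading, as a device might before sending it."""
    return json.dumps({"device_id": device_id, "temperature_c": temperature_c})


def find_overheating(messages, limit_c=TEMP_LIMIT_C):
    """Return the IDs of devices whose reported temperature exceeds the limit."""
    alerts = []
    for raw in messages:
        reading = json.loads(raw)
        if reading["temperature_c"] > limit_c:
            alerts.append(reading["device_id"])
    return alerts


# Simulated messages from three made-up devices.
messages = [
    encode_reading("pump-1", 61.5),
    encode_reading("pump-2", 92.3),
    encode_reading("fan-7", 45.0),
]

alerts = find_overheating(messages)
print("Devices needing attention:", alerts)
```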

Augmented Reality (AR) and Virtual Reality (VR)

Augmented Reality (AR) and Virtual Reality (VR) are two technologies that have been making waves in the tech industry. While both technologies provide immersive experiences, they do so in different ways.

AR adds digital elements to a live view, often by using the camera on a smartphone. For example, Snapchat lenses and the game Pokémon GO are both AR applications. VR, by contrast, offers complete immersion that shuts out the physical world. Using VR devices such as the Oculus Rift, a user can be transported into a range of real-world and imagined environments.

These technologies have wide-ranging applications, from gaming and entertainment to education, training, and even healthcare. For instance, AR can help surgeons visualize the patient’s anatomy in 3D during surgery, potentially increasing precision. VR, on the other hand, is being used to treat conditions like post-traumatic stress disorder (PTSD) through exposure therapy.

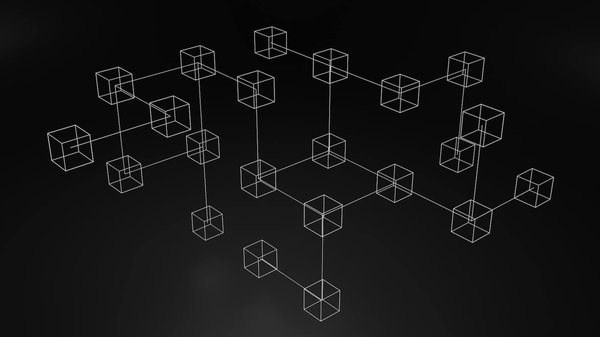

Blockchain Technology

Last but not least, we have blockchain technology. Originally devised for the digital currency Bitcoin, blockchain has since found potential uses that go well beyond cryptocurrency.

Blockchain is, in the simplest of terms, a time-stamped series of immutable data records that is managed by a cluster of computers not owned by any single entity. Each record is linked to the one before it, so altering an earlier record would break the chain and be noticed by the other participants. The result is a transparent, tamper-resistant digital ledger that can be programmed to record not just financial transactions but virtually anything of value.
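The linking of time-stamped records can be sketched in a few lines of Python. In this simplified example, each block stores a SHA-256 hash of the previous block, so changing an old record makes the chain fail validation. The function names and the sample transactions are invented for illustration, and the sketch deliberately leaves out the part that makes a real blockchain decentralized: many independent computers agreeing on which chain is valid.

```python
import hashlib
import json
import time


def block_hash(block):
    """Hash the block's contents (everything except its own stored hash)."""
    payload = json.dumps(
        {key: block[key] for key in ("index", "timestamp", "data", "prev_hash")},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def add_block(chain, data):
    """Append a time-stamped block that points at the previous block's hash."""
    prev_hash = chain[-1]["hash"] if chain else "0" * 64
    block = {
        "index": len(chain),
        "timestamp": time.time(),
        "data": data,
        "prev_hash": prev_hash,
    }
    block["hash"] = block_hash(block)
    chain.append(block)
    return block


def is_valid(chain):
    """Check that no block was altered and that every link is intact."""
    for i, block in enumerate(chain):
        if block["hash"] != block_hash(block):
            return False  # block contents no longer match the stored hash
        if i > 0 and block["prev_hash"] != chain[i - 1]["hash"]:
            return False  # link to the previous block is broken
    return True


chain = []
add_block(chain, "Alice pays Bob 5")
add_block(chain, "Bob pays Carol 2")
valid_before = is_valid(chain)

chain[0]["data"] = "Alice pays Bob 500"  # tamper with an old record
valid_after = is_valid(chain)

print("Valid before tampering:", valid_before)
print("Valid after tampering:", valid_after)
```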

This technology is already showing promise in areas such as supply chain management, healthcare records, and voting systems, to name just a few. Its potential to ensure security and transparency in transactions could revolutionize various sectors, including finance, healthcare, and governance.

Cybersecurity and Information Technologies

As advancements in information technologies continue to reshape industries and daily lives, cybersecurity’s role becomes even more critical. Cybersecurity is the field focused on protecting internet-connected systems, including hardware, software, and data, from digital attacks. With the increasing reliance on technology and data in sectors such as finance, healthcare, and governance, the importance of cybersecurity has never been more significant.

Such attacks are typically aimed at accessing, changing, or destroying sensitive information, interrupting normal business operations, or extorting money from users. With advancements in AI and Machine Learning, cybersecurity technologies are getting smarter, responding to threats more quickly and accurately.

AI and ML in cybersecurity help in threat detection, predicting attacks, and responding to incidents. These technologies can learn from data, identify patterns and anomalies, and automate responses to threats more efficiently than traditional, manual cybersecurity measures.
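A very simple form of anomaly detection can be sketched in Python as follows. The example flags hours in which the number of failed logins is unusually far from the average, measured in standard deviations (a z-score). The login counts, the function name `find_anomalies`, and the threshold of 2.0 are assumptions chosen for illustration; production tools use considerably more sophisticated models.

```python
import statistics


def find_anomalies(values, threshold=2.0):
    """Return the indexes of values more than `threshold` standard
    deviations away from the mean of the series."""
    mean = statistics.mean(values)
    stdev = statistics.pstdev(values)
    if stdev == 0:
        return []  # all values identical, nothing stands out
    return [
        i for i, value in enumerate(values)
        if abs(value - mean) / stdev > threshold
    ]


# Hypothetical failed-login counts for ten consecutive hours.
failed_logins_per_hour = [4, 5, 3, 6, 4, 5, 4, 60, 5, 3]

suspicious = find_anomalies(failed_logins_per_hour)
print("Suspicious hours (by index):", suspicious)
```

Here the spike of 60 failed logins stands out from the usual handful, which is the kind of pattern an automated system could escalate for review or block outright.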

At the same time, the rise of IoT is creating new cybersecurity challenges. The interconnectedness of devices introduces new threats and vulnerabilities, as each connected device could potentially be an entry point for hackers. Therefore, robust security measures are required to protect these devices and the valuable data they hold.

In short, cybersecurity is an essential aspect of current and future information technologies. As these technologies continue to develop and change the way we live and work, our cybersecurity strategies must evolve as well to protect our systems and data effectively.

Conclusion

The rapid advancements in new information technologies have profoundly transformed our lives and the world around us. The emergence of AI and Machine Learning has revolutionized industries by providing efficient and personalized solutions. The Internet of Things has connected the world in ways we could not have imagined a few decades ago. Augmented Reality and Virtual Reality offer immersive experiences, changing the way we learn and interact with our surroundings.

Meanwhile, blockchain technology has the potential to revolutionize transactions, ensuring security and transparency like never before. At the same time, the rise of these new technologies underscores the increasing importance of cybersecurity, as the interconnectedness of our world also presents new vulnerabilities and threats.

As we continue to embrace these new information technologies, it’s important to understand their potential and the challenges they present. It is an exciting time to be witnessing this technological revolution, and it will be fascinating to see what the future holds in the field of information technologies.